Shaping the c̶u̶r̶v̶e culture

Designing KPIs to foster deep dive work

Teams consistently discussing their problem space in depth over long periods tend to achieve robust, long-lasting products and excellence. This work behavior is sought after by leaders and referred to as a deep dive culture. There is much good content on how to develop such a cultural trait.

The Working Backwards book, by two ex-Amazon executives, made waves as it illustrated how Amazon built a deep dive culture using metrics, review processes, and communication schemas. The book coined some valuable concepts for the business world, such as input metrics (controllable metrics that can be directly associated with actions) and output metrics (higher abstraction metrics that represent results or outcomes for the business). The book makes a clear case for how working with input metrics is better and discusses how to navigate selecting the proper input metrics to work with.

A fascinating insight is that you can design input metrics to make the deep dive culture easier to attain.

A paradigm shift: opportunity-oriented metrics

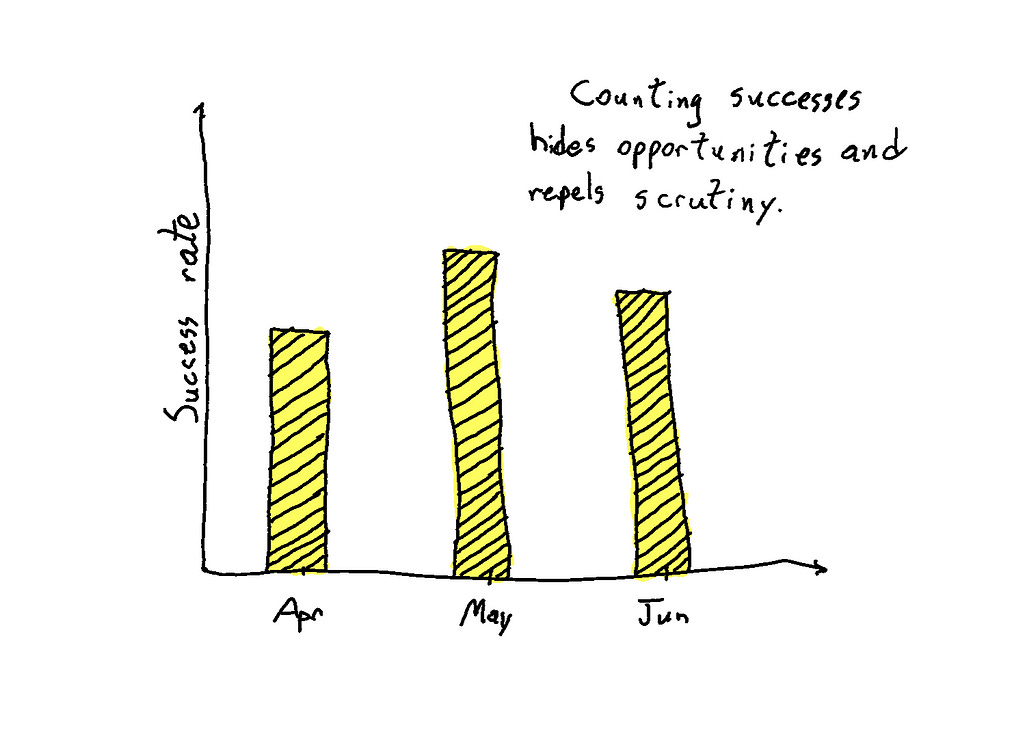

Metrics (input or output) often compute accomplishments and communicate a level of success achieved by the organization. Most well-known metrics (let's call them motivational metrics) follow this pattern: monthly active users, conversion rate, monthly recurring revenue, and page views are some examples.

As teams work and communicate using motivational metrics, there are layers of indirection between their analytical work and the information about where the opportunities for improvement lie. Fundamentally, when you decompose motivational metrics to their units (when you list their computed instances), you see examples of solved problems.

One of the most striking traits of a deep dive culture is that teams scrutinize problems to their root cause and often pick a few concrete examples of opportunities as favorites to expose in discussions or test solutions. You facilitate deep dive work when you design metrics to compute opportunities or unsolved problems (let's call them opportunity-oriented metrics). Abandoned carts, funnel drop rate, and recurring revenue churn are famous metrics computed that way.

Designing metrics for a deep-dive culture

So, what happens when you take opportunities as input?

Clear definition of which opportunities are relevant

Priorities are a vital component of any good corporate strategy and communication. Only a fraction of opportunities for a well-run organization deserve attention at any given moment.

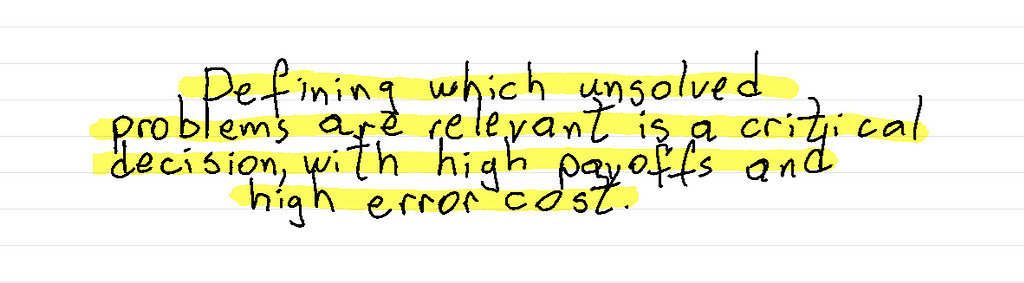

Depending on the corporate focus, some non-successful events could be out of scope and better left aside. When a metric computes unsolved problems instead of accomplishments, its definition communicates what should be considered a meaningful opportunity for the organization.

For example, assume a parcel delivery company's focus is to decrease delayed deliveries. Computing all failed delivery attempts would include attempts that failed before the promised date (and therefore did not impact delayed deliveries, the focus). The company could take only failed delivery attempts at the promised date or later as input, thus declaring that early delivery attempts are out of scope.
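As a minimal sketch of the parcel example above, the metric definition can be written as a filter. The `DeliveryAttempt` record and its field names are hypothetical, made up for illustration; the only rule taken from the example is "failed attempts at the promised date or later".

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class DeliveryAttempt:
    # Hypothetical record shape, assumed for this sketch.
    parcel_id: str
    attempt_date: date
    promised_date: date
    succeeded: bool


def relevant_opportunities(attempts):
    """Keep only failed attempts made on the promised date or later."""
    return [
        a for a in attempts
        if not a.succeeded and a.attempt_date >= a.promised_date
    ]


attempts = [
    DeliveryAttempt("P1", date(2023, 3, 1), date(2023, 3, 3), False),  # failed early: out of scope
    DeliveryAttempt("P2", date(2023, 3, 3), date(2023, 3, 3), False),  # failed on the promised date
    DeliveryAttempt("P3", date(2023, 3, 5), date(2023, 3, 3), False),  # failed after the promised date
    DeliveryAttempt("P4", date(2023, 3, 2), date(2023, 3, 3), True),   # delivered: not an opportunity
]

in_scope_ids = [a.parcel_id for a in relevant_opportunities(attempts)]
```

Here the filter itself is the statement of focus: changing the condition changes what the organization is asked to look at.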

An important caveat is that computing unsolved problems defines the problems or dimensions that matter. It is a critical decision, often influencing the direction of an organization more than the product itself. And as with any other product decision, it may be incorrect. If the most impactful solution lies in a dimension the metric leaves out, you may become utterly blind to it. The authors of Working Backwards refer to this as the tricky trial and error part. Incorporating a process that regularly takes a step back to explore the unfiltered set of non-successful events is prudent.

Concrete opportunity examples are easier to access

In a deep-dive culture, it's critical to have zero friction in accessing opportunities to scrutinize. A fresh list of relevant opportunity instances should be trivial for everyone to access. This tip is the most important insight of the article.

Let's take the space of online user acquisition funnels as an example. The typical motivational metric would be conversion rate: a count of user sessions that reached the bottom of the funnel in proportion to all user sessions. The computed units would be successful user sessions.

While drawing insights from successful user sessions is a viable approach, keeping the teams focused on examples of abandoned user sessions would likely produce a more impactful roadmap over time.

The opportunity-oriented version of the conversion rate could be the share of abandoned sessions among the subset of the market the organization could be focused on at the time — like the share of abandoned sessions for English native speakers between 21 and 30 years old. The computed units would be examples of users the funnel failed to convert, ready for analysis.

It could be as simple as a dashboard tool that exports a spreadsheet file with the computed instances.
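A rough sketch of that idea follows, under stated assumptions: the `Session` record, its fields, and the `"en"` language code are hypothetical, and the segment (English native speakers aged 21 to 30) is the one from the example above. It computes the share of abandoned sessions within the segment and exports the underlying instances as CSV text that a spreadsheet can open.

```python
import csv
import io
from dataclasses import dataclass


@dataclass(frozen=True)
class Session:
    # Hypothetical record shape, assumed for this sketch.
    session_id: str
    native_language: str
    age: int
    converted: bool


def in_focus(s):
    """Segment from the example: English native speakers aged 21 to 30."""
    return s.native_language == "en" and 21 <= s.age <= 30


def abandoned_sessions(sessions):
    """The computed units: in-focus sessions the funnel failed to convert."""
    return [s for s in sessions if in_focus(s) and not s.converted]


def abandoned_share(sessions):
    """Share of abandoned sessions among in-focus sessions."""
    focus = [s for s in sessions if in_focus(s)]
    if not focus:
        return 0.0
    return len(abandoned_sessions(sessions)) / len(focus)


def export_csv(sessions):
    """Return the abandoned instances as CSV text, ready for a spreadsheet."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["session_id", "native_language", "age"])
    for s in abandoned_sessions(sessions):
        writer.writerow([s.session_id, s.native_language, s.age])
    return buffer.getvalue()


sessions = [
    Session("s1", "en", 25, True),
    Session("s2", "en", 22, False),
    Session("s3", "en", 29, False),
    Session("s4", "pt", 24, False),  # outside the segment (language)
    Session("s5", "en", 45, False),  # outside the segment (age)
    Session("s6", "en", 30, True),
]

share = abandoned_share(sessions)
report = export_csv(sessions)
```

The number and the list come from the same definition, so anyone looking at the KPI is one export away from concrete examples.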

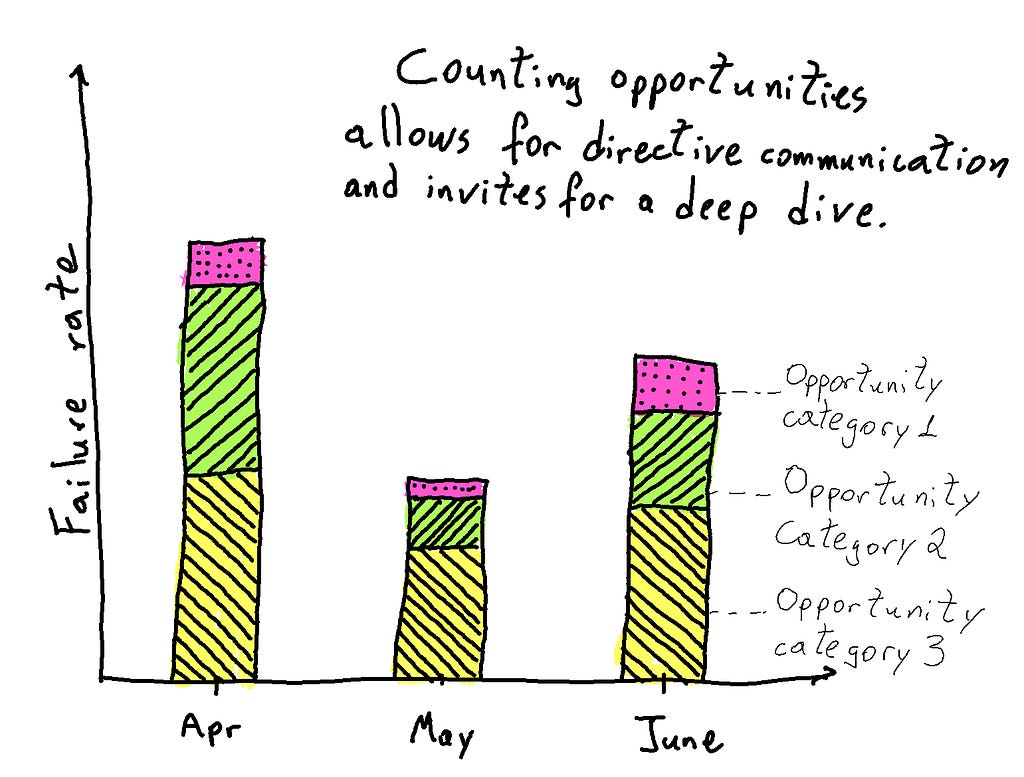

Communicating categories of opportunities

When counting instances of unsolved problems, you'll have a chance to classify them. Moreover, it's possible to implement simple classification rules to group instances in valuable ways.

For example, in the context of user engagement (think Monthly Inactive Users), one could group users based on how long ago their last interaction was or group them by the feature they use more frequently or by user acquisition channel. The organization could communicate the same metric in charts with different classification criteria for a broader perspective.
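To make the grouping concrete, here is a small sketch that counts the same inactive users under two classification criteria. The 30-day inactivity threshold, the bucket boundaries, and the channel names are all assumptions chosen for illustration.

```python
from collections import Counter
from datetime import date

INACTIVE_AFTER_DAYS = 30  # hypothetical threshold for "inactive"


def recency_bucket(days_inactive):
    """Classify an inactive user by time since last interaction (assumed buckets)."""
    if days_inactive <= 60:
        return "31-60 days"
    if days_inactive <= 90:
        return "61-90 days"
    return "over 90 days"


def inactive_users(users, today):
    """Return (user_id, days_inactive, channel) for users past the threshold."""
    result = []
    for user_id, last_seen, channel in users:
        days = (today - last_seen).days
        if days > INACTIVE_AFTER_DAYS:
            result.append((user_id, days, channel))
    return result


today = date(2023, 6, 30)
users = [
    ("u1", date(2023, 5, 20), "ads"),
    ("u2", date(2023, 6, 25), "organic"),  # still active, not counted
    ("u3", date(2023, 3, 1), "organic"),
    ("u4", date(2023, 4, 15), "ads"),
    ("u5", date(2023, 5, 10), "referral"),
]

inactive = inactive_users(users, today)
# Same metric, two classification criteria.
by_recency = Counter(recency_bucket(days) for _, days, _ in inactive)
by_channel = Counter(channel for _, _, channel in inactive)
```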

The obvious benefit from communicating opportunities grouped in categories is that the most impactful classes of opportunities to address become public upfront information instead of the result of an eventual analysis. As a result, teams will have a clearer perspective about where the highest potential yields lie.

A not-so-obvious insight is that naming the categories in specific ways can drive more engagement and promote a general sense of ownership. Considering the organization structure and gearing the names of the categories of opportunities towards the scope of specific teams help them focus and feel responsible.

For example, suppose the subject is a chain of processes, with teams organized into groups responsible for each step of the chain. In that case, one can name each category after the first step where the failure was identified (such as "Delayed at the cross-docking facility").

Dashboards quickly evolve into diagnostic tools

Once there has been an investment into defining the relevant problems to tackle and implementing simple rules to group failure instances into categories, the core components of a diagnostic tool are ready.

Besides applying these rules for consolidating information for charts and KPIs communication, it could be possible to reuse the definitions in internal tools for debugging or customer success operations, democratizing the ability to diagnose failure instances within the organization.

Bonus: define a unit that can evolve with the company

As mentioned earlier, determining what the metric considers relevant events is a powerful way to focus the organization. As the problem space evolves, the organization's focus will change. There may have been good progress in the previous direction, and it will want to tap into a different class of opportunities, or it may have found a solid product-market fit and want to go deeper into one of many classes of opportunities considered earlier.

Much effort goes into communicating a new KPI if you're doing it right. And if it sticks, references and dependencies will unfold throughout the organization.

Consider naming the input instances as units (they will likely end up sharing the name of the KPI). When you name a unit, you can evolve its definition over time without compromising its ecosystem and communication framework. The trade-off, of course, is backward compatibility: values computed under the old definition are no longer directly comparable with the new ones.

For example, assume a sales organization is looking for ways to improve the last step of the sales funnel for its core revenue stream. It defines a KPI for missed sales opportunities while leaving out newer revenue streams that are still developing.

Fast forward a year, and now one of the developing revenue streams has matured and deserves just as much exposure and focus. Having two separate KPIs might not be ideal, as one would need to communicate heavily to introduce the new KPI and then wait for the ecosystem to catch up while the first KPI continues to get all the attention.

If, at the first moment, the organization named the opportunity instances as Near Miss Sales (NMS for short), the newer and expanded focus at the second moment could tag along with all that was built around NMS. The organization could then communicate an update on the direction and that the KPI now reflects this new scenario.

Loggi, a tech startup for express parcel delivery, was growing very quickly, and its operations were starting to feel the burden of hypergrowth from a quality of service perspective. Logistics processes are arduous to mature when growing at +3x YoY.

Our only quality metric was SLA, typically used for external client communication. Because it is contractually binding, SLA pivots on delivery attempts, not consummated deliveries. For example, contracts stated that 98% of all parcels collected from a client had to have at least one delivery attempt by their promised date.

Loggi chartered Pedro Passarelli, Lucas P. C. Branco, and me with leading the work on quality improvement. Initially, leadership expected us to work on a diagnostic so that teams could prioritize specific improvement opportunities.

As we looked at examples of delayed parcel deliveries, we understood that this was a rich exercise and that we should look for ways to enable everyone in the company to do it routinely. It also struck us that we were not dealing with atypical problems and that there were probably standard metrics to support us. So we decided to study (check out a previous article about studying at work) and found an excellent 1994 MIT paper about logistics metrics. We gathered a few compelling concepts from this study:

Behavioral soundness: metrics shape how the organization thinks and behaves, so they must be thought out and communicated to incentivize the expected behavior. As we went through examples of non-delivered parcels, we could detect some ineffective operations driven by the delivery-attempt-based SLA KPI. Over time, people tend to play a game against their target metric.

Internally focused diagnostic metrics. This was a critical confirmation of our feeling that SLA was not a useful KPI to drive an increase in quality of service, as it was too conservative and targeted at an external audience. The idea of defining acceptable standards to describe perfect deliveries was under discussion, but the fact that non-adherence to standards could seamlessly become diagnostic information was a breakthrough.

Metric scale can affect behavior, and percentages are inadequate for excellence expectations when 0.1% matters to the business.

Contrary to what I expected when going after literature on the topic, I concluded that we needed to define our own internally focused diagnostic metric to promote the company's desired quality of service culture.

Defining acceptable standards

We described our perfect deliveries and concluded that a perfect delivery is a parcel delivered on time and at the first delivery attempt. So, we looked at examples of packages that did not match a perfect delivery, and they all described relevant improvement opportunities. We were thrilled.

Next, we tried to define our acceptable standards at a higher resolution, thinking of rules such as:

The time of day a parcel should have left a delivery station for last-mile delivery to be considered on time.

The time of day a parcel should have left the cross-docking facility towards the delivery station to be considered on time.

The various reasons why delivery attempts failed on the last mile.

Some rules ended up being approximations due to the lack of intermediate data. Treating each rule as a prerequisite for the following phases and grouping examples by the first standard non-adherence along the chain started to surface examples grouped by classes of operational errors. Finally, we seemed to be onto something.
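A simplified sketch of grouping by the first non-adherence along the chain is shown below. It is not the rule set we actually ran: the cutoff hours, field names, and the last-mile label are hypothetical, and real rules would be richer than a single comparison per step.

```python
from collections import defaultdict

# Ordered chain of acceptable standards. Each entry is (group label, check).
# Cutoff hours and field names are assumptions made up for this sketch.
STANDARDS = [
    ("failed at cross-docking", lambda p: p["left_cross_docking_hour"] <= 5),
    ("failed at the delivery station", lambda p: p["left_station_hour"] <= 9),
    ("failed on the last mile", lambda p: p["delivered_on_first_attempt"]),
]


def first_non_adherence(parcel):
    """Return the label of the first standard the parcel missed, or None."""
    for label, adheres in STANDARDS:
        if not adheres(parcel):
            return label
    return None  # perfect delivery


def group_by_first_non_adherence(parcels):
    """Group parcel ids by the first standard they failed along the chain."""
    groups = defaultdict(list)
    for parcel in parcels:
        label = first_non_adherence(parcel)
        if label is not None:
            groups[label].append(parcel["id"])
    return dict(groups)


parcels = [
    {"id": "A", "left_cross_docking_hour": 4, "left_station_hour": 8, "delivered_on_first_attempt": True},
    # B misses every standard, but is attributed only to the first one.
    {"id": "B", "left_cross_docking_hour": 7, "left_station_hour": 11, "delivered_on_first_attempt": False},
    {"id": "C", "left_cross_docking_hour": 5, "left_station_hour": 10, "delivered_on_first_attempt": True},
    {"id": "D", "left_cross_docking_hour": 3, "left_station_hour": 9, "delivered_on_first_attempt": False},
]

groups = group_by_first_non_adherence(parcels)
```

Attributing each parcel to the earliest failed step keeps the groups mutually exclusive, so their counts add up to the total number of non-perfect deliveries.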

Communicating a diagnostic

We understood that naming the non-adherence groups in a directive and somewhat judgemental way mattered as it would call for ownership and action. It took us many iterations to get it the way we wanted. For the groups before the last mile, we followed the naming template "failed at cross-docking," "failed at the delivery station," etc.

We knew we were on the right track when we presented the metric and operations managers wanted to look at the examples. Some even felt uncomfortable with the conclusions, considering the diagnostic a bit unfair. However, communicating it that way made them care.

Setting the bar for excellence

When we started putting it all together, something felt off, as some relevant classes of opportunities were reading at values like 0.6%. In some cases, we were dealing with complex problems with already developed solutions, such as incentives to drivers for delivery consummation. We expected teams to improve on them by 10% over a year, which could mean a change of 0.06pp in the metric. Motivating people to diligently pursue that relatively small contribution for a long time seemed challenging.

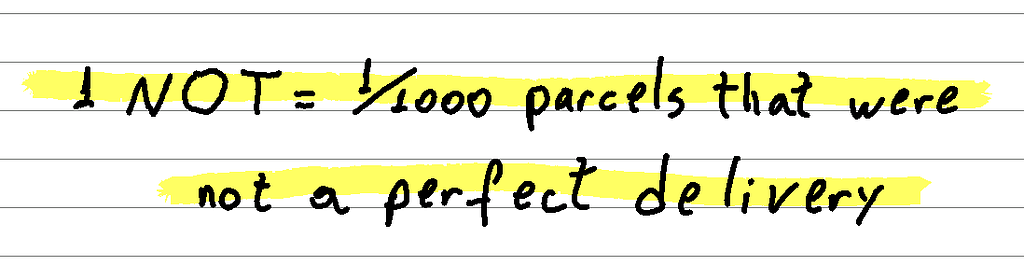

We took the cue from the remark on scale and communicated the metric per 1,000 instead of per 100. But, even though everyone understood %, we had no good way of communicating per thousand other than defining a new unit.
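The arithmetic behind the change of scale is simple, and a quick sketch makes the difference in readability visible. The parcel counts below are made up to reproduce a 0.6% class improved by 10%; they are not real Loggi figures.

```python
def share(count, total, per):
    """Express count/total on a chosen scale (per=100 for %, per=1000 for per thousand)."""
    return per * count / total


total_parcels = 100_000           # illustrative volume, not a real figure
failures_before = 600             # a class of opportunities reading 0.6%
failures_after = failures_before * 9 // 10  # a 10% relative improvement

pct_before = share(failures_before, total_parcels, 100)
pct_after = share(failures_after, total_parcels, 100)
pct_change = share(failures_before - failures_after, total_parcels, 100)

per_thousand_before = share(failures_before, total_parcels, 1000)
per_thousand_after = share(failures_after, total_parcels, 1000)
per_thousand_change = share(failures_before - failures_after, total_parcels, 1000)
```

On the percentage scale the year's work reads as 0.6% to 0.54%, a change of 0.06pp. Per thousand, the same work reads as 6.0 to 5.4, which is easier to set targets against and to celebrate.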

Wrapping it all up and shaping the culture

This new metric became a product in itself. So we put much effort into communicating it, from defining the unit and the classes of opportunities to designing the dashboard (what goes above the fold and below, etc.).

We named the unit NOT, which is short for Not On Time. The catchy name helped a lot in getting traction.

As we went around the company communicating and working with teams on using NOT, we got a lot of traction and engagement. Operations leadership demanded deep-dive analysis, and teams suddenly had a practical tool and a communication framework to support them.

Quality has improved consistently since then, with observable impact (global NOT improved by +30% in an excellence-grade problem space). We could declare beforehand which initiatives were promising and which were not, point out region-specific quality challenges, and rally the whole company towards actionable goals with little communication effort.

Years later, we learned that using a perfect delivery as the target was no longer valuable. Specific aspects of our operations needed more attention and exposure, such as the recovery process after a failed delivery attempt. In retrospect, defining a unit was a great idea. As the focus and maturity of the company evolved, we adjusted what the unit accounted for, taking advantage of the existing communication framework. OKRs, governance rituals, training materials: everything that referred to NOT as the North Star quality metric still made sense, even though what the unit represented evolved. (Hat tip to Natacha Alencar, who helped us on NOT 2.0.)

I did not see it coming, but NOT was one of the most robust pieces of work I have ever accomplished. Everything in the environment was favorable (team, leadership, problem space), and I'm thankful to all. Also, I hope that what we've learned can be applied elsewhere.

Shaping the c̶u̶r̶v̶e culture was originally published in Desirable Difficulty on Medium.